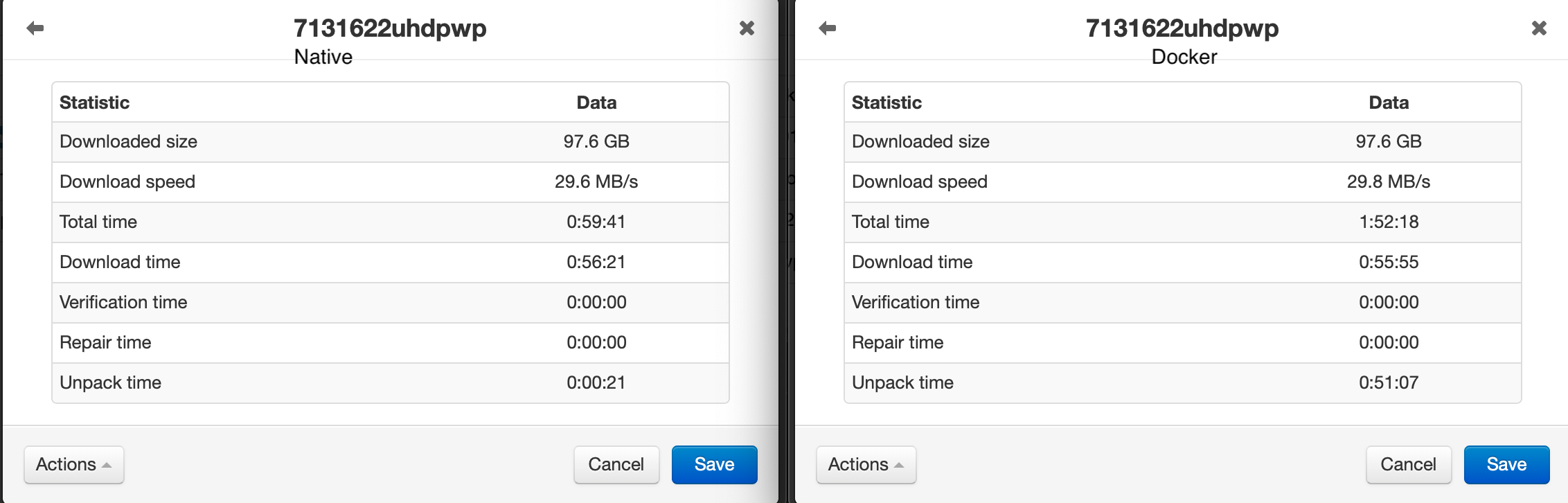

You can make things complicated, or if you really want speed, you can go real RAID, maybe with a cache SSD. (Unraid and SnapRAID, and I think Synology, just fill disk by disk and calculate parity; real RAID writes to all disks.) But either the disks are fast enough, or your cache will fill up.

But how often does it happen that there is something you want to watch right now and you have to download it first? Sure, it has happened to me, but I wouldn't build my system around that. In the end, I guess your data ends up on a mechanical drive, and that will be the limiting factor. So unless your connection is faster than 1 Gbps, or you are streaming from your disk while copying to it, a good brand SSD to download to and a good HDD to extract to should be fine.

Is extracting speed really that important? And what type of connection do you even have? SATA 3 can do 6 Gbps, and many modern quality drives max out that speed, which is the reason NVMe is popular. Let's say you download to an SSD and extract from it at the same time: that's still only 3 Gbps to or from the disk. A quick look at WD Red drives shows a sequential write speed (which is what unrar's writes should be) of 150-250 MB/s, also faster than 1 Gbps. Even with 66% overhead, this allows you to max out a 1 Gbps connection easily.
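To make the unit juggling above easier to follow, here is a rough back-of-the-envelope sketch in Python. It only converts the figures quoted in the post (1 Gbps link, 6 Gbps SATA 3, 3 Gbps of combined SSD traffic, 150-250 MB/s HDD writes) into common units. The function and constant names are made up for illustration, decimal units are assumed (1 Gbps = 1000 Mbit/s), and the SATA figure is the raw line rate, so real-world payload throughput will be somewhat lower.

```python
# Back-of-the-envelope throughput check for the figures quoted in the post.
# Assumption: decimal units, 1 Gbps = 1000 Mbit/s and 1 byte = 8 bits.

def gbps_to_mb_per_s(gbps: float) -> float:
    """Convert gigabits per second to megabytes per second."""
    return gbps * 1000 / 8


def mb_per_s_to_gbps(mb_per_s: float) -> float:
    """Convert megabytes per second to gigabits per second."""
    return mb_per_s * 8 / 1000


LINK_GBPS = 1.0                   # internet connection from the post
SATA3_GBPS = 6.0                  # SATA 3 raw line rate, not payload rate
SSD_LOAD_GBPS = 3.0               # download + extract traffic, as quoted
HDD_SEQ_WRITE_MB_S = (150, 250)   # WD Red sequential write range, as quoted

link_mb_s = gbps_to_mb_per_s(LINK_GBPS)
print(f"1 Gbps link        = {link_mb_s:.0f} MB/s")
print(f"SATA 3 line rate   = {gbps_to_mb_per_s(SATA3_GBPS):.0f} MB/s")
print(f"SSD load / SATA 3  = {SSD_LOAD_GBPS / SATA3_GBPS:.0%} of line rate")

for hdd_mb_s in HDD_SEQ_WRITE_MB_S:
    hdd_gbps = mb_per_s_to_gbps(hdd_mb_s)
    headroom = hdd_mb_s / link_mb_s
    print(f"HDD at {hdd_mb_s} MB/s = {hdd_gbps:.1f} Gbps "
          f"({headroom:.1f}x the link speed)")
```

Under these assumptions the link needs about 125 MB/s, so even the slow end of the quoted HDD range sits above it, which is the point the post is making.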

Figured I would try here as well as NZBGet and my newshosting sites.

For about 3 weeks now, I will download files and the video cuts out after about 30 minutes. NZBGet shows the file fully downloads and uncompresses, but the uncompressed file is smaller than it should be. Watching the video in any player cuts out at the same point. So my first thought was obviously the file/host, but if I regrab the same file it gives all the same results (i.e. 100% download), and the second or third time it is now the correct size and everything works fully. This was happening a solid 5-6 times a week, and a week ago I thought it went away. (It shouldn't matter, but I saw my Docker image was almost full, so I recreated it and the issue seemed to go away for a week.) These files are coming from different sites and the files are verified working (2nd, 3rd or 4th time is the charm).

Any ideas would be appreciated, I have no idea what is going on.

Do you use Sonarr? If so, are you using Drone Factory or Completed Download Handling for the file renaming? I was having the same issue, and for a long time I also thought it was NZBGet. I switched from using the Drone Factory to Completed Download Handling and I have not had this issue anymore.

Thanks eroz, I changed that setting, hopefully it helps.

Apparently this is an issue with the version of Mono? So, question to the dev group: did you update any version of the base image in the last month or so? I am just wondering because it worked fine for X number of months and then just started doing this out of the blue.
